Cocoon

March 18, 2026

—

Axios

Great Sky

March 13, 2026

—

Semafor

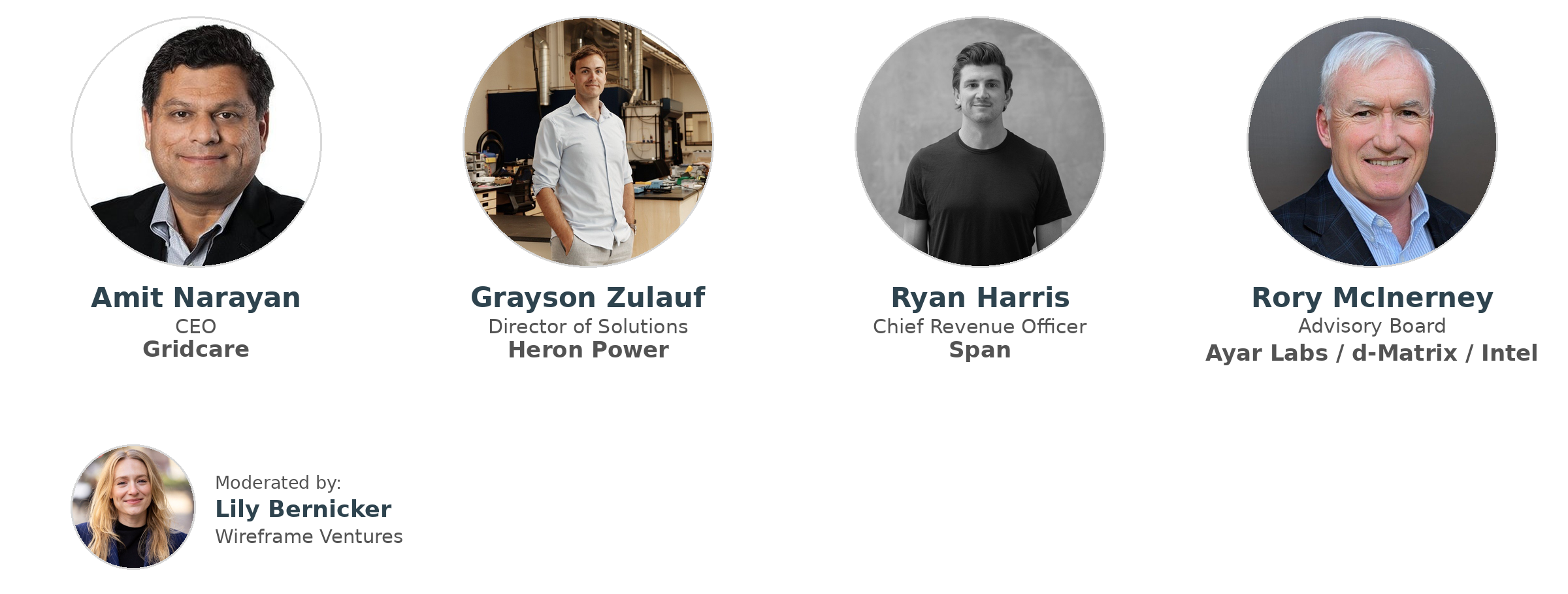

2025 Climate Investor Survey

September 23, 2025

—

Wireframe

Enveda Biosciences

September 4, 2025

—

Fierce Biotech

Our 2024 Impact Report

September 17, 2024

—

Wireframe

LevelTen Energy

July 16, 2024

—

MSN

Xcimer Energy

June 4, 2024

—

Axios